Computer Adaptive Testing (CAT) tailors question difficulty in real time, delivering faster, more precise scores than fixed tests. It reduces fatigue, strengthens security, and aligns tightly with blueprints for fair decisions in hiring, certification, placement, and learning. This guide is vendor-neutral and practical.

Computer Adaptive Test Meaning

A computer adaptive test (CAT) is a computer-based exam that adjusts the difficulty of each subsequent question in real time based on how you answered previous ones. This personalization means fewer questions can measure your ability more precisely than a fixed, one-size-fits-all test.

Why does CAT exist?

- Accuracy: Zeros in on your actual skill level faster.

- Efficiency: Reaches a precise score with fewer items, reducing test time and fatigue.

- Better experience: Questions feel appropriately challenging—not too easy, not impossibly hard.

- Security: Spreads exposure across a large item bank, reducing overuse and predictability.

Computer Adaptive Testing Definition

A computer adaptive test (CAT) is an assessment that estimates a test-taker’s latent ability θ and dynamically selects items from a calibrated bank to minimize measurement error at that estimate. Item parameters (e.g., difficulty b, discrimination a, and sometimes guessing c) come from Item Response Theory (IRT) models. The test continues until stopping rules (e.g., target standard error or item/time limits) are met, producing a score and precision index.

Key Components:

- Starting ability estimate (θ₀): Initial guess for ability—set by a default prior, short locator test, or background data.

- Item selection algorithm: Chooses the following item that maximizes information at current θ while honoring blueprint/content constraints.

- Exposure control: Techniques (e.g., randomization, caps) that prevent overuse of popular items and reduce item compromise.

- Scoring & stopping rules: Ability is updated after each response (e.g., MLE, MAP/EAP estimators); the test stops when precision reaches a target (e.g., SE(θ) ≤ threshold) or when administrative limits are reached.

How a Computer Adaptive Test Works (Step-by-Step)

Computer Adaptive Testing follows a predictable lifecycle: design, delivery, and decision. You calibrate items and rules, adapt questions in real time as examinees respond, then stop when precision is reached—reporting scores, evidence, and analytics for continuous improvement.

Before the test

To enable real-time adaptation, you must first engineer the ecosystem—calibrated item bank, blueprint constraints, selection/exposure rules, and stopping criteria—validated through simulations and pilots.

- Build a calibrated item bank: Each question has IRT parameters (difficulty, discrimination, guessing) and metadata (topic, cognitive level).

- Set blueprint & constraints: Minimum/maximum items per domain, enemy items, content balance, language, and time rules.

- Choose algorithms: Starting θ, estimator (MLE/MAP/EAP), item selection (max information, content-balanced), and exposure control.

- Define stopping rules: Target SE(θ), max items, and time limits; set pass/fail (if applicable).

- Run simulations/pilots: Validate precision, fairness (e.g., DIF monitoring), and security before launch.

During the test

Once guardrails are set, the engine operationalizes them: starting from an initial ability estimate, it selects the most informative item, updates ability after each response, and continuously balances content and security.

- Start with θ₀: A default prior, brief locator, or background data seeds the initial ability estimate.

- Deliver an item that fits θ: The engine selects a question with high information while honoring blueprint and exposure limits.

- Score in real time: After each response, update θ and its standard error using the chosen estimator.

- Adapt difficulty: If you answer correctly, the following item tends to be harder; if incorrect, easier—always within content constraints.

- Protect the bank: Randomization and caps limit overuse; security and proctoring rules apply (especially online).

When the test ends

Adaptation continues until precision or administrative limits are met; then the system finalizes the score, documents uncertainty, and compiles operational analytics for quality, fairness, and security reviews.

- Precision reached: Stop when SE(θ) meets the target, or when max items/time is reached.

- Report results: Provide score (θ or scaled), precision/SE, and—if allowed—domain subscores and decision classifications.

- Log analytics: Item exposure, pathing, and fit statistics feed future reviews and recalibration cycles.

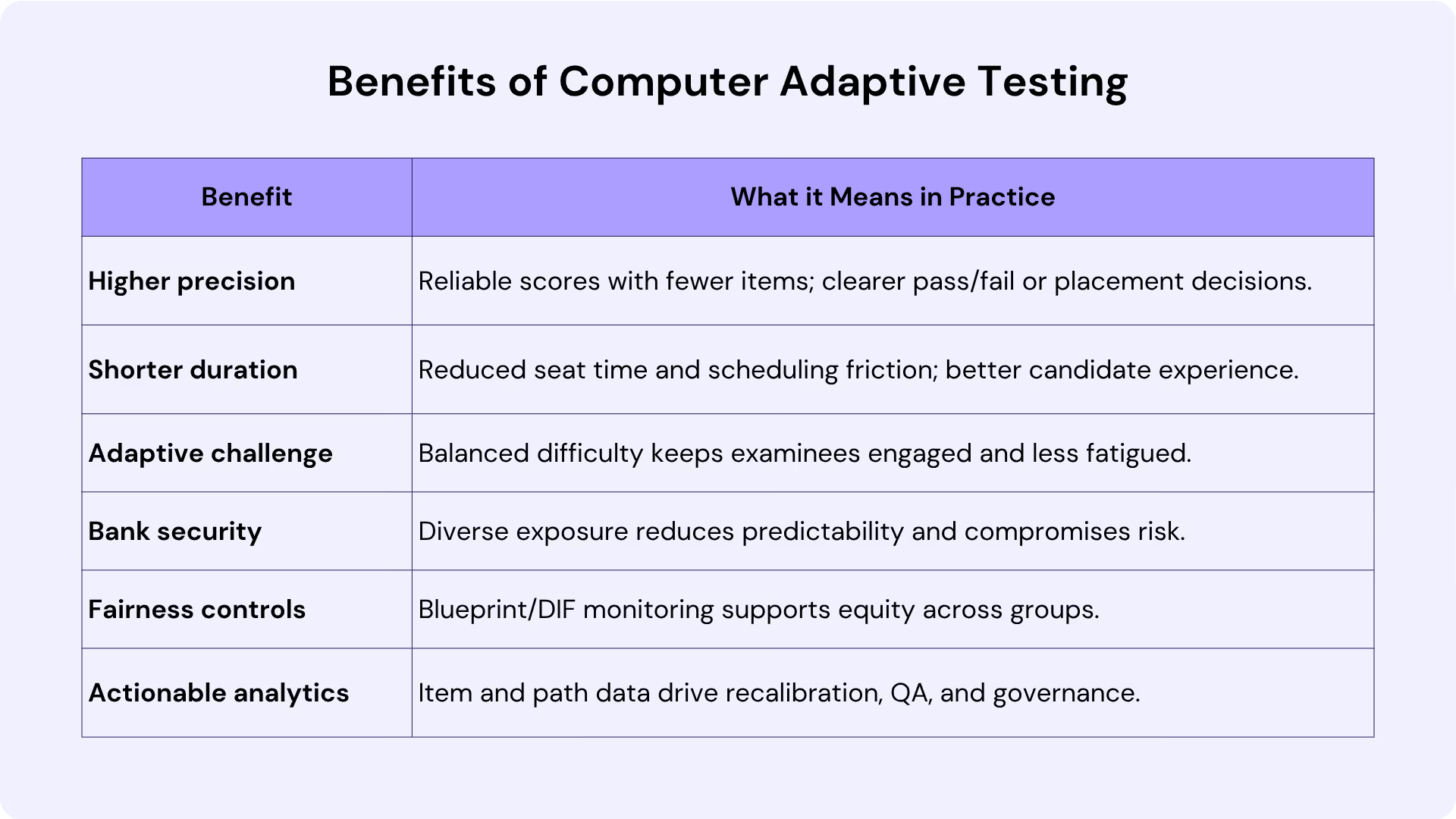

Benefits of Computer Adaptive Testing

A well-designed CAT delivers faster, more precise measurement with a better test-taker experience. By targeting item difficulty to each person’s performance, organizations gain reliable decisions with fewer items and stronger security.

Disadvantages & Risks (Be Transparent)

CAT isn’t a universal upgrade. It demands careful design, robust item banks, and rigorous governance. Before embracing CAT, acknowledge the trade-offs that come with adaptive delivery. The gains in precision and experience depend on rigorous foundations—content, psychometrics, and operations. Here’s what commonly goes wrong and how to plan safeguards.

Large, Well-Calibrated Item Bank Required

CAT relies on a sufficiently large, well-calibrated item bank that covers the full range of abilities and all blueprint domains. Developing such a bank takes substantial time and cost: items must be written, reviewed, pretested, and calibrated with IRT to ensure stable parameters. If the bank is thin—especially at extreme difficulty levels—precision suffers, item paths converge, and exposure risk increases.

Algorithm & Validation Complexity

Designing the engine involves selecting a sensible starting ability estimate, choosing an estimator (MLE, MAP, or EAP), and defining an item selection strategy that balances information, content constraints, and exposure limits. Each decision has trade-offs that require psychometric expertise. Robust simulations, pilot studies, and ongoing validation against precision targets and fairness standards are essential to preserve credibility over time.

Content Balance & Subscores

Without strong blueprint constraints, adaptive delivery can inadvertently undersample certain content areas, leading to stakeholder concerns and weak subscores. When subscores are required, each domain needs enough items to achieve acceptable reliability. This often increases development demands and may lengthen tests if not carefully optimized.

Fairness, Bias & Accessibility

Fairness must be continually monitored. Differential item functioning (DIF) analyses help identify items that behave differently for comparable groups, and accessibility requirements must be engineered into the adaptive flow. Screen readers, alternative input methods, time accommodations, and language support need dedicated testing so that adaptation does not conflict with assistive technologies or create unintended barriers.

Operational & Technical Dependencies

CAT implementations, particularly at scale or in remote settings, depend on stable connectivity, secure browsers, and reliable proctoring workflows. Security operations—such as bank protection, logging, and incident response—must be mature and well-documented. Interruptions or weak controls can degrade examinee experience and threaten test validity.

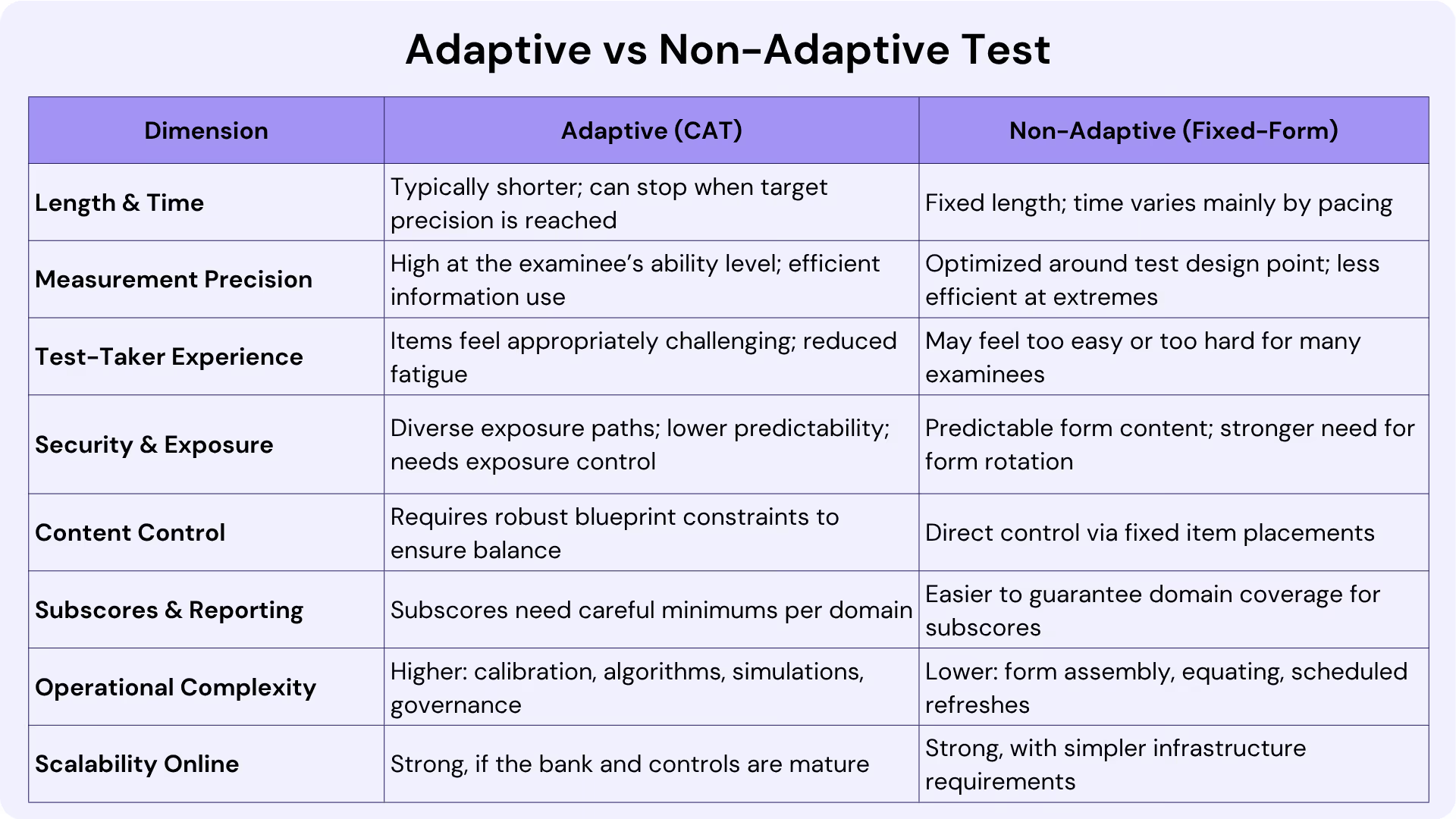

Adaptive vs Non-Adaptive Test

Adaptive and fixed-form tests measure the same constructs but operate very differently. CAT personalizes item difficulty in real time, while non-adaptive forms present a pre-set sequence. Understanding their trade-offs helps match assessment design to program goals.

Side-by-Side Comparison:

Choose CAT when you need efficient, precise decisions (e.g., placement, pass/fail, hiring screens) and can invest in calibrated item banks, simulations, and governance. It shines with wide ability ranges, frequent testing, and strong item security needs.

Choose fixed-form when subscores and uniform content coverage are paramount, banks are small, or stakeholders require identical exposure. Many programs adopt hybrid models—fixed sections for essential content balance, followed by adaptive segments for precision and time savings.

Computer Adaptive Test Online For Organizations and Employers

Delivering CAT online blends adaptive measurement with secure, scalable delivery. HR, L&D, and credentialing teams get remote-friendly assessments that personalize difficulty in real time while enforcing identity checks, proctoring, and strict item-exposure controls.

What “CAT online” entails for recruiters

An online CAT platform authenticates candidates, locks down the test environment, and streams adaptive items with low latency. Proctoring (live or AI-assisted), secure browsers, session logging, and exposure caps protect integrity while content constraints maintain blueprint balance.

- Implementation checklist

Define a defensible blueprint, build and pretest items, then calibrate with IRT. Configure starting ability, estimator, item selection, exposure controls, and stopping rules. Validate with simulations and pilots, then finalize accessibility, data privacy, and anti-cheating measures before scaling.

- Vendor-neutral tips for success

Center governance with versioning, change control, and audit trails. Refresh overexposed items, recalibrate regularly, and monitor fairness via DIF and subgroup analytics. Pair strong identity verification with layered proctoring and use pathing/exposure data to guide continuous bank growth.

Types of adaptive tests for hiring

Adaptive hiring assessments span cognitive ability (reasoning, problem-solving), skills-based tests (coding, data analysis, writing), and role/industry batteries (sales, customer support, finance). Each adapts item difficulty to a candidate’s responses, delivering precise pass/fail or rank-order decisions with fewer questions and tighter test security.

Conclusion

Computer Adaptive Testing pairs psychometric rigor with operational efficiency, delivering precise scores in less time while improving candidate experience and protecting item banks. It isn’t universal—strong item development, validation, fairness monitoring, and governance are essential.

For organizations and employers, a well-run online CAT—backed by calibrated items, exposure controls, and layered proctoring—can scale hiring and learning decisions confidently. Start small with simulations and pilots, refine blueprints and algorithms, then expand your bank and analytics to sustain reliability, fairness, and security over time.