Compare Codility alternatives offering deeper skills insights, modern developer assessments, and more flexible workflows. This guide helps hiring teams choose platforms aligned with today’s fast-moving technical hiring needs.

According to LinkedIn, 69% of companies struggle to assess technical skills reliably. That difficulty is driving demand for coding simulations, role-based tasks, and science-backed evaluation tools that improve hiring outcomes.

Codility is widely used for coding assessments and developer screening, but many organizations now seek alternatives offering richer simulations, adaptive testing, or more flexible pricing. This guide highlights platforms that improve technical evaluation, enhance candidate experience, and support a scalable, skills-first hiring strategy across engineering, product, and data roles.

iMocha is an AI-powered skills assessment platform rated 4.4 out of 5 on G2. It offers 2,500+ tests across technical, digital, cognitive, and language skills. Its AI-driven Skills Intelligence identifies skill gaps, benchmarks candidates, and supports hiring and upskilling decisions. iMocha includes coding simulators, project-based evaluations, proctored assessments, and role-based test templates. Designed for global teams, it enables enterprise-scale skill measurement across engineering, data, cloud, cybersecurity, and functional roles.

Ideal for organizations seeking comprehensive technical and digital skill validation with AI-backed benchmarking, helping teams evaluate readiness across modern tech stacks, emerging skill areas, and enterprise-wide digital roles.

Xobin is an AI-powered talent assessment platform rated 4.7 out of 5 on G2, trusted by 5,000+ companies across 60+ countries, including several Fortune 500 organizations. It offers 3,400+ skill-based and 2,500+ role-based assessments, plus a library of 180,000+ validated questions covering technical, cognitive, behavioral, and domain-specific competencies. Coding assessments, AI-driven video interviews, psychometric testing, and advanced proctoring are combined in a single product. With recruitment automation, collaborative hiring tools, and AI-powered evaluation, Xobin helps organizations hire faster while maintaining fairness, accuracy, and test integrity across global hiring workflows.

Xobin is ideal for organizations that want a skills-first hiring platform combining assessments, AI interviews, coding simulators, and secure proctoring. It enables recruiters and hiring teams to evaluate candidates objectively, reduce hiring bias, and scale recruitment efficiently across technical and non-technical roles.

DevSkiller is a technical skills assessment platform powered by RealLifeTesting™, a methodology that evaluates developers using practical, project-based tasks mirroring real work environments. It supports coding interviews, automated scoring, and assessments aligned to modern tech stacks, including backend, frontend, data, cloud, and DevOps roles. With TalentScore, DevSkiller enables organizations to benchmark skills, personalize tests, and predict role readiness more accurately across engineering teams of all sizes.

A powerful option for engineering teams needing deeper, real-world technical evaluation rather than only algorithmic tests. It helps assess practical capability, code quality, and problem-solving approaches relevant to actual on-the-job performance.

Woven is a technical hiring platform that evaluates engineering candidates using work-sample–style scenarios such as debugging tasks, systems design challenges, and asynchronous technical walkthroughs. Instead of algorithm puzzles, Woven focuses on real-world engineering competencies including problem decomposition, communication, collaboration, and on-call readiness. Human review combined with structured scoring provides deeper insight into how candidates perform in practical situations, making it valuable for evaluating mid-level and senior engineers.

A strong choice for organizations wanting deeper, context-rich evaluation of real engineering skills. It is ideal when communication, debugging ability, and architectural thinking matter more than solving algorithm puzzles.

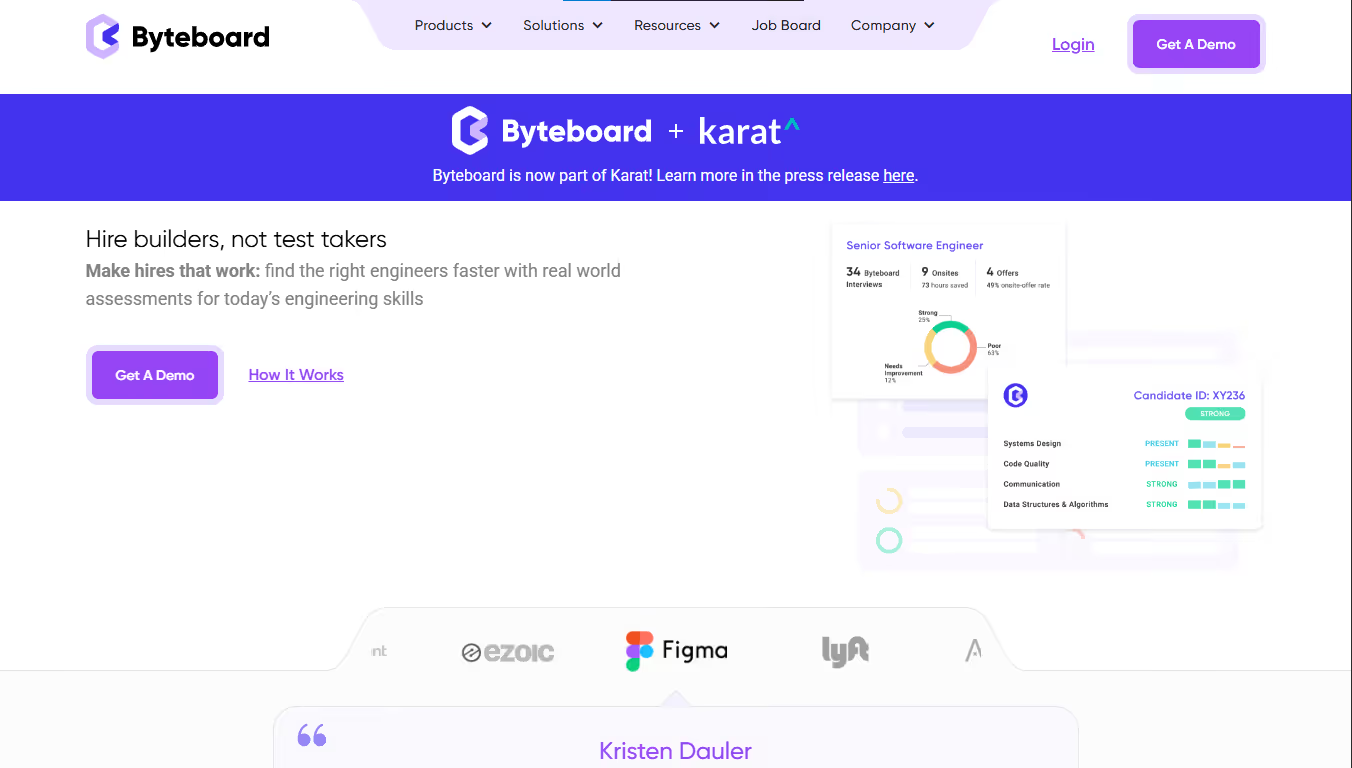

Byteboard is a project-based technical assessment platform designed to measure engineering ability through real-world tasks rather than algorithmic puzzles. Backed by Google, Byteboard evaluates skills like systems design, debugging, code comprehension, and communication through structured work simulations. Its rubric-based human evaluation removes guesswork, offering detailed, competency-aligned insights. Byteboard aims to provide a more inclusive, equitable, and job-relevant assessment experience for software engineering roles at all levels.

Ideal for organizations wanting a more inclusive, accurate, and realistic measure of engineering skill, especially when communication, design thinking, and practical code understanding matter more than solving timed puzzles.

Equip holds a 4.8/5 rating on G2 and focuses on structured interviews and hiring intelligence workflows. It is designed to improve interview consistency and reduce bias through standardized scorecards and analytics. While highly rated for usability, it is not built as a deep technical coding simulation platform.

Engineering-led teams often transition to platforms combining structured technical simulations with behavioral and cognitive performance insights in one ecosystem.

WeCP holds a 4.7/5 rating on G2 and specializes in skill-based technical simulations for engineering and digital roles. It offers customizable coding environments and AI-assisted grading workflows. Users value its simulation realism, though broader behavioral and cognitive benchmarking capabilities remain limited.

Organizations seeking deeper predictive hiring accuracy often adopt solutions integrating technical performance with structured behavioral and cognitive assessment frameworks.

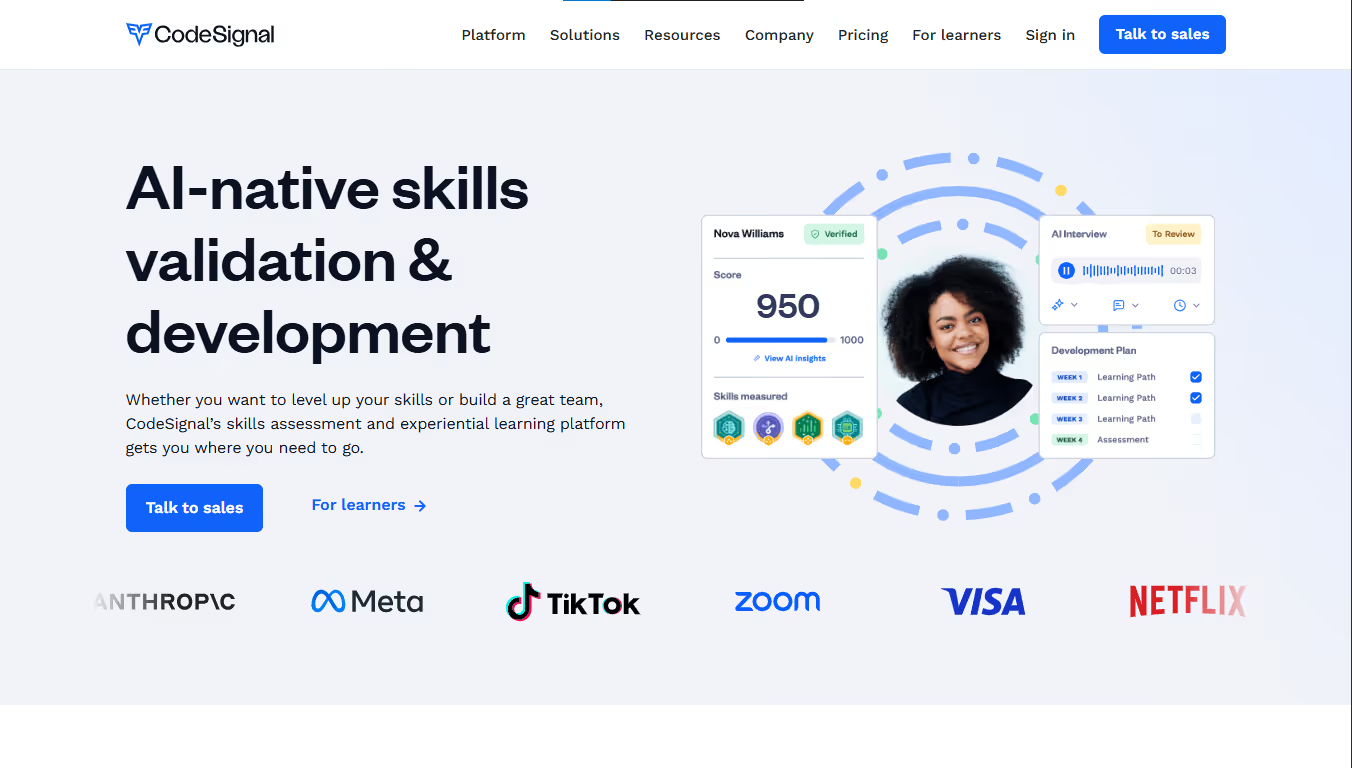

CodeSignal is a technical hiring platform focused on validating real-world engineering ability through skills-based tasks, IDE-powered coding environments, and role-specific assessments. Its Certified Evaluations, developed with industry experts, provide standardized, predictive benchmarks for software engineering, data, and DevOps roles. With advanced proctoring, AI scoring assistance, and deep analytics, CodeSignal helps organizations run fair, consistent, and scalable evaluations across large technical hiring pipelines.

Ideal for teams wanting standardized, predictive, and scalable technical assessments, especially when evaluating engineering ability across multiple roles with consistency and validity.

CodinGame for Work is a gamified developer assessment platform that evaluates programming skill through immersive coding games, AI challenges, and real-world technical tasks. Designed to boost candidate engagement, it tests logic, problem-solving, and coding proficiency in an interactive environment. CodinGame supports 25+ languages and includes customizable tests, automated scoring, multiplayer coding battles, and advanced anti-cheating tools, making it useful for both hiring and employer-branding–driven technical recruitment.

Perfect for organizations wanting interactive, high-engagement coding assessments that help attract developers and differentiate their hiring process with gamified, skills-focused evaluation.

Choosing the right Codility alternative depends on whether you need deeper simulations, real-world tasks, or broader role coverage. Compare platforms on assessment validity, customization, analytics depth, proctoring strength, and pricing. Select a solution that improves prediction accuracy, enhances candidate experience, and aligns with your technical hiring volume and skills strategy.
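One way to make this comparison concrete is a simple weighted scoring matrix. The sketch below is a minimal illustration, not a validated selection method: the criteria come from the paragraph above, while the weights, the 1-5 ratings, and the names "Platform A" and "Platform B" are hypothetical placeholders to be replaced with your own trial results.

```python
from typing import Dict, List, Tuple

# Criteria named in the guide; the weights are illustrative assumptions
# and should be tuned to your own hiring priorities.
WEIGHTS: Dict[str, float] = {
    "assessment_validity": 0.30,
    "customization": 0.20,
    "analytics_depth": 0.20,
    "proctoring_strength": 0.15,
    "pricing": 0.15,
}


def weighted_score(ratings: Dict[str, float],
                   weights: Dict[str, float] = WEIGHTS) -> float:
    """Return the weighted average of 1-5 ratings across all criteria."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(ratings[c] * w for c, w in weights.items()) / total_weight


def rank_platforms(candidates: Dict[str, Dict[str, float]]) -> List[Tuple[str, float]]:
    """Sort platforms from highest to lowest weighted score."""
    scored = [(name, round(weighted_score(r), 2)) for name, r in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical ratings gathered from an internal pilot (not real vendor data).
candidates = {
    "Platform A": {"assessment_validity": 4, "customization": 3,
                   "analytics_depth": 5, "proctoring_strength": 4, "pricing": 2},
    "Platform B": {"assessment_validity": 3, "customization": 4,
                   "analytics_depth": 3, "proctoring_strength": 3, "pricing": 5},
}

ranking = rank_platforms(candidates)
for name, score in ranking:
    print(f"{name}: {score}")
```

Keeping the weights explicit forces the hiring team to agree on priorities before looking at vendors, which tends to make the final choice easier to defend.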

Ensure coding assessments mirror real production tasks rather than abstract algorithm puzzles, aligning evaluation criteria directly with day-to-day engineering responsibilities and collaboration needs.

Coding accuracy alone does not predict retention or teamwork. Choose platforms integrating behavioral, cognitive, and communication insights alongside technical simulations.

Automated grading should be explainable. Demand clarity on scoring models, plagiarism detection logic, bias mitigation processes, and how results influence shortlist decisions.

Verify encrypted coding environments, IP protection safeguards, secure browser controls, role-based access permissions, audit trails, SOC 2 or ISO certifications, and global data privacy compliance.

Confirm seamless ATS integration, automated assessment triggers, API flexibility, bulk candidate processing, and centralized reporting dashboards supporting high-volume engineering hiring.

Reports must translate coding performance into structured interview probes, collaboration indicators, and performance risk summaries that engineering leaders can confidently apply.

Codility is a strong technical assessment platform, but alternatives may offer deeper simulations, more flexible pricing, or stronger real-world evaluation. Compare options based on assessment depth, candidate experience, analytics, and scalability. The right platform should strengthen prediction accuracy, reduce bias, and align with your team’s evolving technical hiring needs.

Start by defining the skills you need to evaluate: algorithmic ability, full-stack proficiency, debugging, or systems thinking. Compare platforms on realism, reporting depth, proctoring strength, and workflow fit. The right tool should reflect your team’s technical expectations and hiring volume.

Project tasks mimic real engineering work and often provide better insight into problem decomposition, communication, and code quality. Standard coding tests still add value, but combining both typically delivers stronger prediction accuracy and reduces false positives.

Shorter, role-aligned tasks improve completion rates. Offer clear instructions, avoid overlong challenges, and choose platforms with intuitive interfaces. Simulations that feel realistic, not gimmicky, help candidates stay engaged and show their true abilities.

Ensure role profiles and expectations are aligned with the new assessment format. Teams often need a short calibration period to adjust scoring, customize tasks, and train interviewers to interpret new types of evaluation data.

Proctoring tools improve fairness when used transparently. Share expectations upfront and choose platforms offering gentle, non-intrusive monitoring. When candidates understand the purpose and boundaries, proctoring rarely hurts their experience and greatly increases trust in your process.

They can, when the tasks reflect real job demands and include debugging, architecture reasoning, and communication checks. Combining coding tests with behavioral signals or technical interviews often produces the most accurate, balanced hiring decisions.
